The structured approach aims to improve the clarity and maintainability of programs. Only three basic programming structures are used: sequence, selection and iteration.
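As a hypothetical illustration (the function and its name are not from these notes), the Python sketch below is built only from the three structured constructs. It uses statements run in order (sequence), an `if` (selection) and a `for` loop (iteration):

```python
# Hypothetical example: sum the even numbers in a list using only
# sequence, selection and iteration.
def sum_of_evens(numbers):
    total = 0                  # sequence: statements run one after another
    for n in numbers:          # iteration: repeat for each item in the list
        if n % 2 == 0:         # selection: only add the number if it is even
            total += n
    return total

print(sum_of_evens([1, 2, 3, 4, 5, 6]))  # prints 12
```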

Two tools used to design structured programs are flowcharts and pseudocode.

Pseudocode tends to be more useful than flowcharts for designing complex algorithms, and it corresponds more closely to the iteration structures of a programming language.

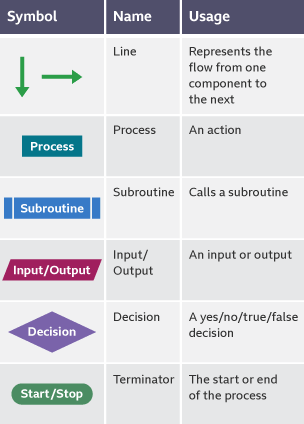

The different symbols for a flowchart can be found below:

Concurrent computing is related to, but distinct from, parallel computing.

Parallel computing requires multiple processors, each executing different instructions simultaneously, with the goal of speeding up computations. It cannot be achieved with a single processor.

Concurrent computing, on the other hand, takes place when several processes are running and each in turn is given a slice of processor time. This gives the appearance that several tasks are being performed simultaneously, even though only one processor is being used. It requires a processor scheduling algorithm to decide which process runs next.
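These notes do not name a particular scheduling algorithm; round robin is assumed here as one common example. The sketch below simulates a single processor handing out fixed time slices (the `quantum`) to each process in turn. A process that has not finished goes to the back of the queue. The process names and burst times are made up for illustration.

```python
from collections import deque

def round_robin(burst_times, quantum):
    """Simulate round-robin scheduling on a single processor.

    burst_times: dict mapping process name -> CPU time it still needs
    quantum: the fixed time slice each process is given per turn
    Returns the order in which slices ran, as (name, time_used) pairs.
    """
    queue = deque(burst_times.items())
    timeline = []
    while queue:
        name, remaining = queue.popleft()
        used = min(quantum, remaining)        # run for at most one slice
        timeline.append((name, used))
        if remaining > used:                  # not finished: back of the queue
            queue.append((name, remaining - used))
    return timeline

print(round_robin({"A": 5, "B": 2, "C": 4}, quantum=2))
# [('A', 2), ('B', 2), ('C', 2), ('A', 2), ('C', 2), ('A', 1)]
```

Only one process ever runs at a time. However, because the slices interleave, all three appear to make progress together.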

Benefits and drawbacks of concurrent processing:

A problem is defined as being computable if there is an algorithm which can solve every instance of it in a finite number of steps.

Methods of problem solving:

Strategies for problem solving:

Examples of this include binary trees.

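Backtracking is a methodical way of trying out different sequences of decisions until you find one that leads to a solution. Solving a maze is a typical problem of this kind. When a path reaches a dead end, you go back to the last junction and try a different route.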
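A minimal sketch of backtracking on a small grid maze follows. The maze encoding (`#` for walls, `.` for open cells) and the function name are assumptions made for illustration. The search extends the path one cell at a time. When every direction from a cell fails, it removes that cell from the path (`path.pop()`) and returns to the previous cell to try another direction.

```python
def solve_maze(maze, start, goal):
    """Find a path through a grid maze by backtracking.

    maze: list of strings, '#' is a wall, '.' is an open cell
    start, goal: (row, col) tuples
    Returns a list of (row, col) cells from start to goal, or None.
    """
    rows, cols = len(maze), len(maze[0])
    path = []
    visited = set()

    def explore(r, c):
        # Reject cells outside the grid, walls, and cells already tried
        if not (0 <= r < rows and 0 <= c < cols):
            return False
        if maze[r][c] == "#" or (r, c) in visited:
            return False
        visited.add((r, c))
        path.append((r, c))
        if (r, c) == goal:
            return True
        # Try each direction in turn; stop at the first that succeeds
        for dr, dc in ((0, 1), (1, 0), (0, -1), (-1, 0)):
            if explore(r + dr, c + dc):
                return True
        path.pop()  # dead end: undo this step and backtrack
        return False

    return path if explore(*start) else None


maze = [
    "..#",
    "#..",
    "#.#",
]
print(solve_maze(maze, (0, 0), (2, 1)))
# [(0, 0), (0, 1), (1, 1), (2, 1)]
```

In this maze, the search first wanders into the dead end at `(1, 2)`. It then backtracks to `(1, 1)` and tries the next direction, which reaches the goal.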

Data mining is the process of digging through big data sets to discover hidden connections and predict future trends. Big data is the term used for data sets too large to be easily handled in a traditional database.
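One simple data-mining technique is counting which items frequently occur together, as in supermarket "market basket" analysis. The sketch below is a toy version of that idea: it counts co-occurring pairs of items and keeps the pairs seen at least `min_count` times. The shopping data is invented for illustration, and real data mining on big data would use far larger data sets and dedicated tools.

```python
from collections import Counter
from itertools import combinations

def frequent_pairs(transactions, min_count):
    """Count how often each pair of items appears in the same transaction
    and keep only the pairs seen at least min_count times."""
    counts = Counter()
    for basket in transactions:
        # sorted(set(...)) ignores duplicates and gives each pair one fixed order
        for pair in combinations(sorted(set(basket)), 2):
            counts[pair] += 1
    return {pair: n for pair, n in counts.items() if n >= min_count}

baskets = [
    ["bread", "milk"],
    ["bread", "butter", "milk"],
    ["beer", "crisps"],
    ["bread", "butter"],
]
print(frequent_pairs(baskets, min_count=2))
# {('bread', 'milk'): 2, ('bread', 'butter'): 2}
```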

Intractable problems are problems for which an algorithm may exist, but it would take an unreasonably long time to find the solution for all but small inputs. An example is the traveling salesman problem, where the number of possible routes grows factorially with the number of cities.
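The sketch below shows why the traveling salesman problem becomes intractable. A brute-force solution must check every possible tour. With the start city fixed, that is (n - 1)! orderings. The distance matrix is a made-up example; this is the naive exhaustive algorithm, not a practical solver.

```python
import math
from itertools import permutations

def brute_force_tsp(dist):
    """Try every possible tour and return (shortest_length, tour).

    dist: square matrix where dist[i][j] is the distance from city i to j.
    City 0 is fixed as the start, so (n - 1)! orderings are checked.
    """
    n = len(dist)
    best_len, best_tour = math.inf, None
    for order in permutations(range(1, n)):
        tour = (0,) + order + (0,)
        length = sum(dist[a][b] for a, b in zip(tour, tour[1:]))
        if length < best_len:
            best_len, best_tour = length, tour
    return best_len, best_tour

dist = [
    [0, 2, 9, 10],
    [2, 0, 6, 4],
    [9, 6, 0, 3],
    [10, 4, 3, 0],
]
print(brute_force_tsp(dist))  # (18, (0, 1, 3, 2, 0))

# The number of tours to check grows factorially with the number of cities
for n in (5, 10, 15, 20):
    print(n, "cities:", math.factorial(n - 1), "tours")
```

Four cities need only 6 tours. However, 20 cities already need about 1.2 × 10^17, which is far too many to check in a reasonable time.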

A heuristic approach to problem solving employs an algorithm that is not guaranteed to find the optimal solution, but is adequate for the purpose. This may be achieved by trading optimality, completeness, accuracy or precision for speed.
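A well-known heuristic for the traveling salesman problem is "nearest neighbour": from the current city, always travel to the closest unvisited city. The sketch below uses this strategy, which is chosen here for illustration. It runs in O(n²) time rather than factorial time, but it can miss the best tour. The distance matrix is a made-up example picked to show this.

```python
def nearest_neighbour_tour(dist, start=0):
    """Heuristic TSP tour: always travel to the closest unvisited city.

    Fast (O(n^2)) but not guaranteed to find the shortest tour.
    Returns (length, tour).
    """
    n = len(dist)
    unvisited = set(range(n)) - {start}
    tour = [start]
    length = 0
    while unvisited:
        here = tour[-1]
        nxt = min(unvisited, key=lambda city: dist[here][city])
        length += dist[here][nxt]
        tour.append(nxt)
        unvisited.remove(nxt)
    length += dist[tour[-1]][start]  # return to the starting city
    tour.append(start)
    return length, tuple(tour)

dist = [
    [0, 1, 3, 2],
    [1, 0, 1, 3],
    [3, 1, 0, 10],
    [2, 3, 10, 0],
]
print(nearest_neighbour_tour(dist))  # (14, (0, 1, 2, 3, 0))
# Checking all tours by hand, the shortest is 0-2-1-3-0 with length 9
```

The heuristic trades optimality for speed. Its greedy early choices force the expensive 2-to-3 leg at the end.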

Performance modelling is the process of simulating different user and system loads on a computer using a mathematical approximation, rather than doing actual performance testing, which could be difficult and expensive.
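As one example of a mathematical approximation (chosen here for illustration; these notes do not name a model), a server can be treated as an M/M/1 queue. In this model, requests arrive at rate λ and are served at rate μ, and the average response time is W = 1 / (μ − λ). The sketch below uses this formula to estimate how response time changes as load rises, without running a real load test. The 100 requests/s capacity is an assumed figure.

```python
def mm1_response_time(arrival_rate, service_rate):
    """Mean response time of an M/M/1 queue: W = 1 / (mu - lambda).

    arrival_rate (lambda): requests arriving per second
    service_rate (mu): requests the server can complete per second
    """
    if arrival_rate >= service_rate:
        raise ValueError("queue is unstable: arrivals outpace service")
    return 1.0 / (service_rate - arrival_rate)

# Model a server assumed to handle 100 requests/s under increasing load
for load in (50, 80, 90, 99):
    ms = mm1_response_time(load, 100) * 1000
    print(f"{load} req/s -> {ms:.1f} ms average response time")
```

The model predicts that response time rises sharply as load approaches capacity: 20 ms at half load, but 1000 ms at 99% load.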

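Pipelining is the technique of splitting tasks into smaller parts and overlapping the processing of each part, so that one part of a task is processed while a different part of another task is processed at the same time. It is commonly used in microprocessors, for example to overlap the fetch, decode and execute stages of successive instructions.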
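The sketch below prints which instruction is in which stage on each clock cycle. It assumes a simple 3-stage fetch–decode–execute pipeline with one cycle per stage and no stalls. With k stages and n instructions, the pipelined version needs k + n − 1 cycles, compared with k × n cycles if each instruction had to finish before the next began.

```python
STAGES = ["Fetch", "Decode", "Execute"]  # assumed 3-stage pipeline

def pipeline_schedule(n_instructions, stages=STAGES):
    """Return schedule[cycle] = list of (instruction, stage) pairs active in
    that clock cycle, assuming one cycle per stage and no stalls."""
    k = len(stages)
    total_cycles = k + n_instructions - 1
    schedule = []
    for cycle in range(total_cycles):
        active = []
        for i in range(n_instructions):
            s = cycle - i  # instruction i enters the first stage at cycle i
            if 0 <= s < k:
                active.append((i, stages[s]))
        schedule.append(active)
    return schedule

n = 4
for cycle, active in enumerate(pipeline_schedule(n), start=1):
    print(cycle, ", ".join(f"I{i}:{stage}" for i, stage in active))

print("pipelined cycles:", len(STAGES) + n - 1)  # 6
print("unpipelined cycles:", len(STAGES) * n)    # 12
```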